Coolant Distribution Manifold (CDM)

The CDMs are the distribution pipes that supply coolant to each server and return the warmed coolant to the CDU. Vertical manifolds are placed at the back of the rack and connected directly to the CDU through the IT coolant piping network. A CDM is selected by its rated heat transfer capacity, which shall be equal to or greater than the rack density. A typical manifold is rated for 100-200 kW. Horizontal manifolds can also be placed at the front of the rack in a 1U rack-mount space. They connect the vertical manifolds at the rear of the rack to the cold plates on systems whose inlet and outlet hoses are at the front of the rack.
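The selection rule above (rated capacity equal to or greater than the rack density) can be sketched as a simple check. This is a minimal illustration, not a vendor sizing procedure: the function name `select_manifold` and the candidate ratings are hypothetical, chosen only to fall within the 100-200 kW range mentioned above.

```python
from typing import Optional, Sequence


def select_manifold(rack_density_kw: float,
                    candidate_ratings_kw: Sequence[float]) -> Optional[float]:
    """Return the smallest candidate rating that covers the rack density.

    Returns None when no candidate is large enough.
    """
    adequate = [r for r in candidate_ratings_kw if r >= rack_density_kw]
    return min(adequate) if adequate else None


# Hypothetical catalogue of manifold ratings in kW (illustrative values only).
ratings = [100.0, 150.0, 200.0]

print(select_manifold(120.0, ratings))  # smallest rating that covers 120 kW
print(select_manifold(250.0, ratings))  # None: no candidate is large enough
```

Picking the smallest adequate rating is a design choice of this sketch; in practice a margin above the rack density may be preferred.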

Flexible hoses supply cold liquid from the manifolds to the CPU and GPU cold plates and return the heated liquid. The couplings at both ends of each hose shall be high-performance Quick Disconnects (QDs), each typically sized in the range of 4-6 kW. QD design is not currently controlled by any standard, and the more connectors there are in the loop, the higher the pressure drop. In addition, special safety-type QD designs are more complicated and further increase the pressure drop. The selection of QDs and pipe sizing directly affects the system’s overall operating pressure and the flow to and from the CDU.
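Because the hoses, QDs, and cold plate on one branch are connected in series, their pressure drops add, which is why each extra connector raises the total. The sketch below illustrates only that bookkeeping. The component names and kPa values are hypothetical placeholders, not measured or vendor data, and real pressure drops vary with flow rate.

```python
from typing import Mapping


def total_pressure_drop_kpa(component_drops_kpa: Mapping[str, float]) -> float:
    """Sum the pressure drops of components connected in series."""
    return sum(component_drops_kpa.values())


# Hypothetical branch from manifold to cold plate and back (placeholder values).
branch = {
    "supply_hose": 5.0,
    "supply_qd": 3.0,
    "cold_plate": 20.0,
    "return_qd": 3.0,
    "return_hose": 5.0,
}

# The same branch with one additional pair of QDs in the path.
branch_with_extra_qds = dict(branch, extra_supply_qd=3.0, extra_return_qd=3.0)

print(total_pressure_drop_kpa(branch))
print(total_pressure_drop_kpa(branch_with_extra_qds))
```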

Coolant inlet and outlet temperatures, together with the total required flow rate and flow impedance, are the design parameters that determine the sizing and arrangement of the CDM and flexible hoses. Systems operating at extremely high pressure carry a higher risk of failure and leakage. Non-spill connectors are required, and including a liquid level sensor is recommended.
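The link between heat load, coolant temperatures, and required flow rate follows the steady-state heat balance Q = m · cp · ΔT. The sketch below rearranges it for volumetric flow. It assumes water-like properties (cp of about 4.18 kJ/(kg·K) and density of about 1000 kg/m³); glycol mixtures and other coolants have different values. The function name, the defaults, and the 100 kW load with a 10 K temperature rise are illustrative assumptions, not design figures.

```python
def required_flow_lpm(heat_load_kw: float,
                      inlet_temp_c: float,
                      outlet_temp_c: float,
                      cp_kj_per_kg_k: float = 4.18,       # assumed: water-like
                      density_kg_per_m3: float = 1000.0   # assumed: water-like
                      ) -> float:
    """Coolant flow in litres per minute needed to carry away heat_load_kw."""
    delta_t = outlet_temp_c - inlet_temp_c
    if delta_t <= 0:
        raise ValueError("outlet temperature must exceed inlet temperature")
    # kW / (kJ/(kg*K) * K) gives kg/s
    mass_flow_kg_s = heat_load_kw / (cp_kj_per_kg_k * delta_t)
    volume_flow_m3_s = mass_flow_kg_s / density_kg_per_m3
    return volume_flow_m3_s * 1000.0 * 60.0  # m^3/s -> L/min


# Illustrative case: 100 kW rack, coolant warming from 30 °C to 40 °C.
flow = required_flow_lpm(100.0, 30.0, 40.0)
print(round(flow, 1))  # roughly 143.5 L/min under the assumptions above
```

Halving the allowed temperature rise doubles the required flow, which in turn raises the pressure drop through the manifold, hoses, and connectors.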

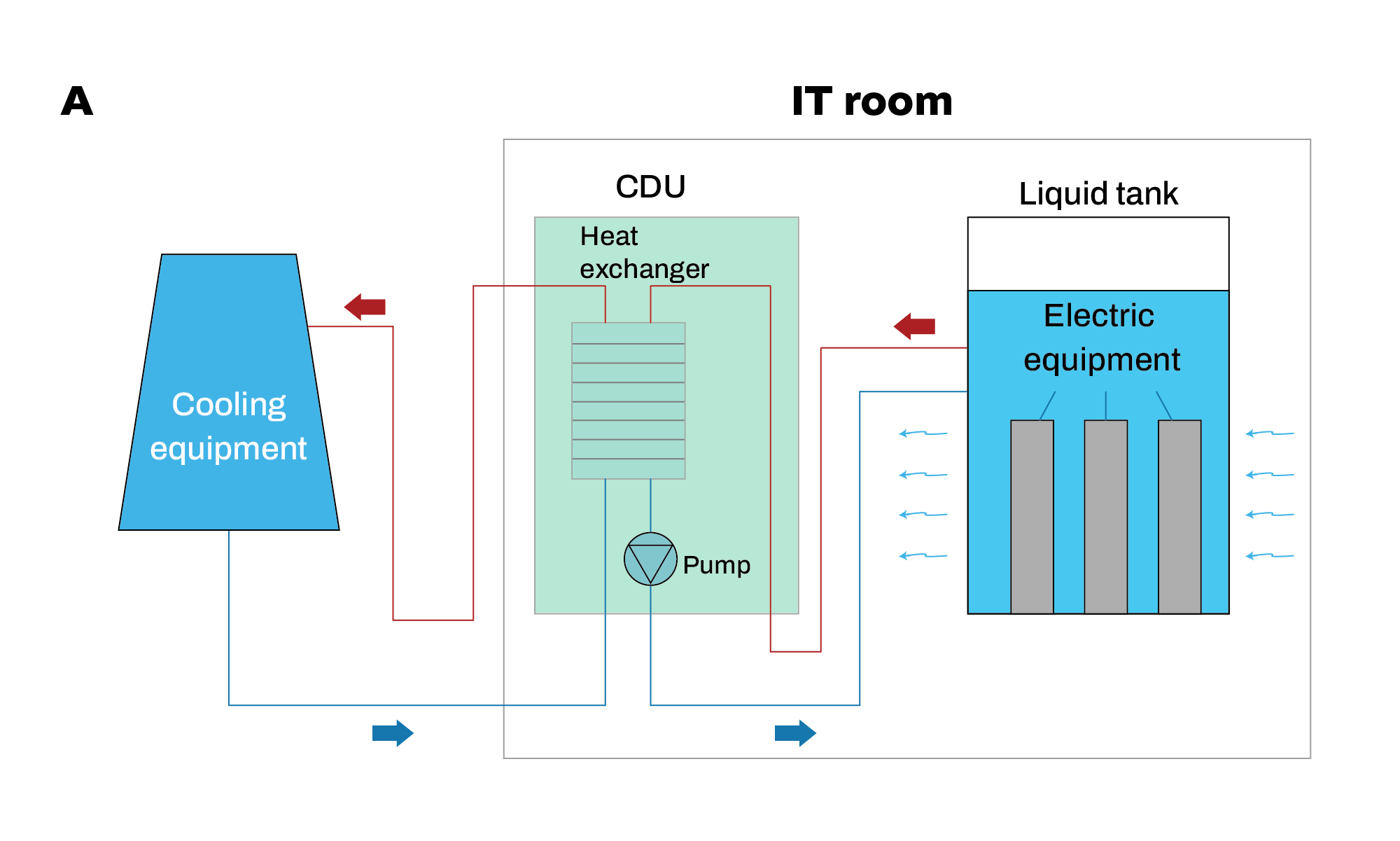

Immersion Tank

The immersion tank houses vertically mounted servers in a dielectric bath and circulates the dielectric fluid through a CDU to remove heat. Each tank is considered a module, and its capacity can vary from 60 to 300 kW. Part of the tank can also house single or redundant CDUs, along with surrounding dry zones that give direct access to the network and power (PDU) components. Smaller tanks can be stacked within properly designed rack units to achieve a footprint similar to that of existing air-cooled racks. These racks can also serve as liquid containment in the event of a leak.

As each tank is equipped with its own CDU, the modules can be directly connected to the facility water network through provided flow and return piping connections without any further intermediate unit. The liquid used for immersion cooling is non-conductive and non-corrosive, so it may be used with electronic components.

Each immersion cooling tank is standalone, compact, and easy to deploy, especially in environments where the servers will sit in a confined space without data center infrastructure. However, the tanks can also fit within the complex layouts of traditional DC facilities and connect to a standard water loop. Immersion cooling tanks can provide an AC or DC power supply (external rectifiers required), which addresses the new market demand for DC-powered ITE.

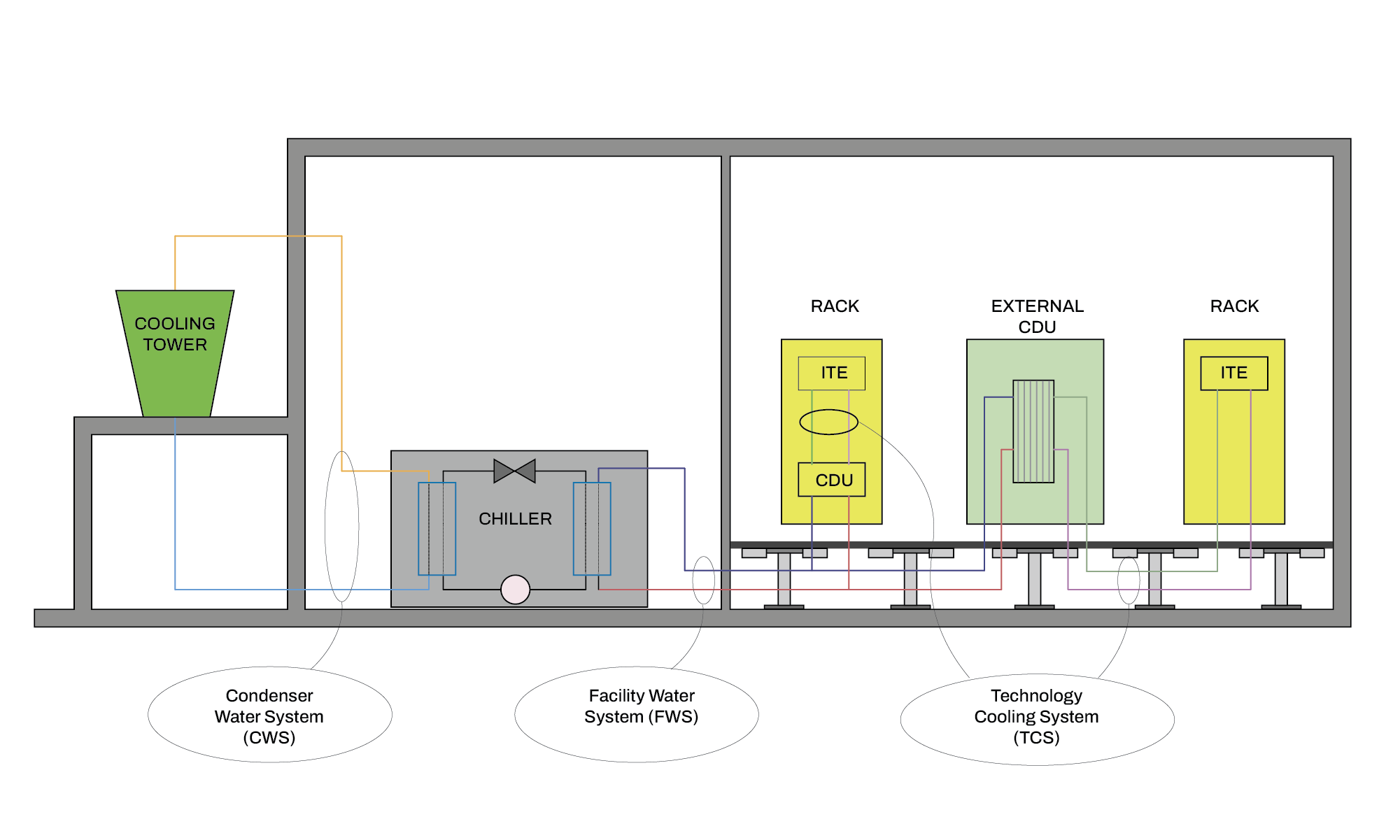

Heat Rejection Unit

Heat is rejected through existing or new, purpose-sized dry coolers, air-cooled chillers, or hybrid coolers. The right type of heat rejection unit is selected mainly according to the ambient temperature range at the site, with water availability as a further constraint. The ambient temperatures of northern climates allow the use of conventional dry coolers, while higher temperatures require hybrid solutions with water evaporation, such as cooling towers. In areas where ambient temperatures are very high and water is scarce, air-cooled refrigerant-based chillers are essential to support heat removal.
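The selection guidance above can be written as a small decision function. This is a rough sketch of the qualitative rules only: the `Climate` categories, the function name, and the returned labels are hypothetical, and no temperature thresholds are implied. Cases the text does not address (for example, a warm site where water is scarce, or a very hot site with water available) default to a hybrid cooler here purely as an assumption.

```python
from enum import Enum


class Climate(Enum):
    COOL = "cool"          # e.g. northern climates
    WARM = "warm"          # higher ambient temperatures
    VERY_HOT = "very_hot"  # very high ambient temperatures


def suggest_heat_rejection(climate: Climate, water_scarce: bool) -> str:
    """Map site conditions to a heat rejection unit type (qualitative only)."""
    if climate is Climate.COOL:
        return "dry cooler"
    if climate is Climate.VERY_HOT and water_scarce:
        return "air-cooled chiller"
    # Assumption: all remaining cases use a hybrid (evaporative) solution.
    return "hybrid cooler"


print(suggest_heat_rejection(Climate.COOL, water_scarce=False))
print(suggest_heat_rejection(Climate.WARM, water_scarce=False))
print(suggest_heat_rejection(Climate.VERY_HOT, water_scarce=True))
```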

Using existing dry coolers or cooling towers for final heat rejection may be possible, but these systems often require modification to support liquid cooling. For example, depending on the location of the facility, the dry cooler may require an adiabatic assist to maintain the lower supply temperatures required for liquid cooling throughout the year.